Monday, 9:42 AM. Our churn-risk model suddenly looked heroic. The dashboard said precision jumped overnight, and two people dropped a 🎉 in Slack before coffee.

By 11:15 AM, support was forwarding angry messages from customers we had marked “high risk” even though they had just renewed. The model wasn’t drifting. Our data timeline was.

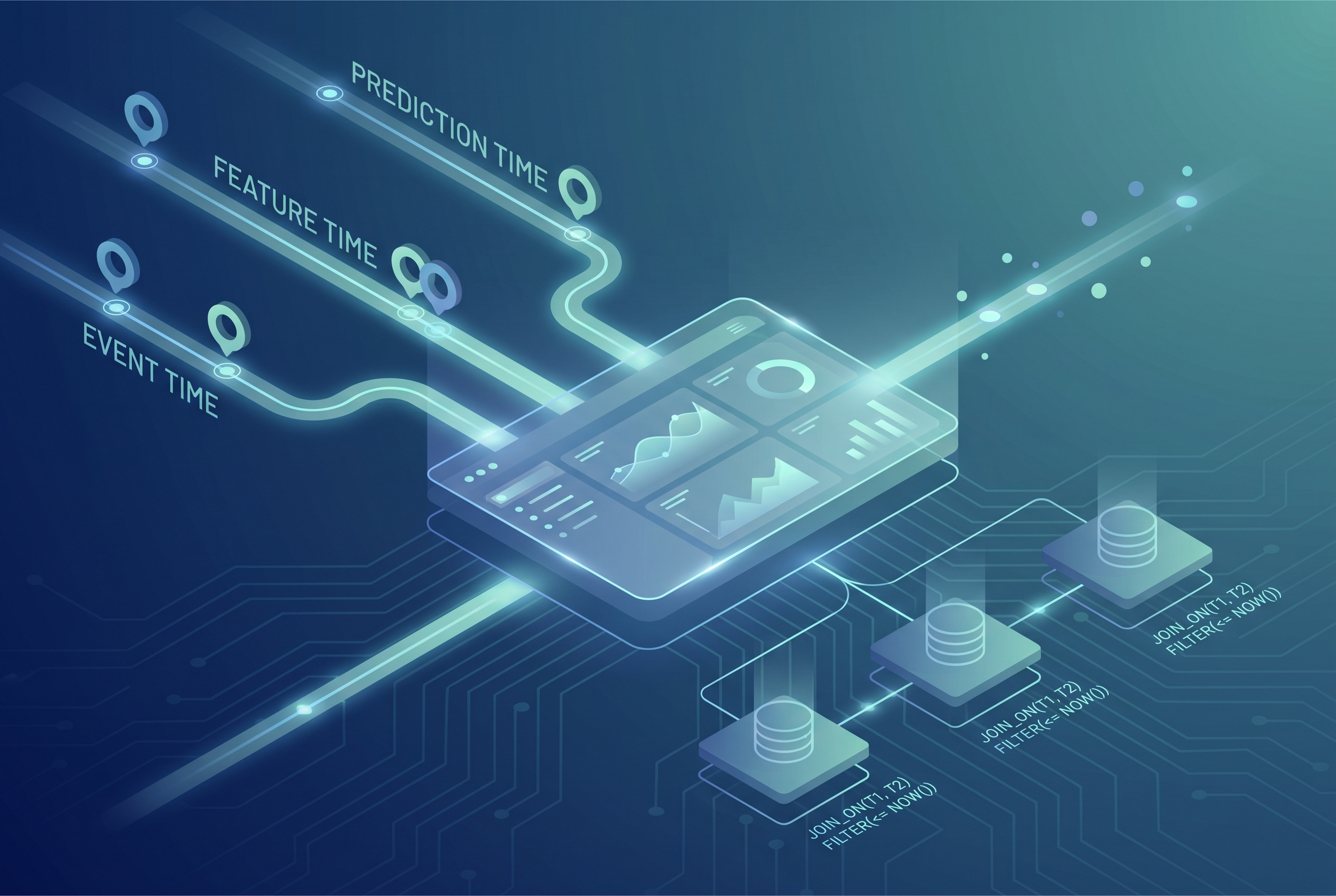

What broke us was not a bad algorithm. It was a quiet mismatch between event time, feature freshness, and training cutoffs. In plain terms, we leaked future information into training and then asked the model to predict in production without it. This post is a practical 2026 playbook for point-in-time joins, data leakage hygiene in machine learning, and reliable feature pipelines built on dbt snapshots plus a simple feature freshness SLO.

The real bug: one entity, three clocks

Most teams track one timestamp and call it done. In production, you usually need three:

- Event time: when the user action actually happened.

- Ingest time: when your warehouse first saw the record.

- Prediction time: when you asked the model to decide.

If features are joined on ingest time instead of event time, or if your labels are computed with data that was not available at prediction time, you create optimistic offline metrics and disappointing real behavior. That exact trust gap is what we discussed in our SQL reliability article, where “correct query” and “correct moment” are different problems (query plan drift incident).
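To make the three clocks concrete, here is a minimal Python sketch. The `FeatureRow` class and the `visible_at` helper are hypothetical names for illustration, and the rule it encodes (a feature counts only if it had both happened and landed in the warehouse by prediction time) is one reasonable reading of the problem, not a universal definition.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class FeatureRow:
    user_id: str
    event_ts: datetime   # when the underlying action happened
    ingest_ts: datetime  # when the warehouse first saw the record


def visible_at(row: FeatureRow, prediction_ts: datetime) -> bool:
    """True only if the row had both happened and arrived by prediction time."""
    return row.event_ts <= prediction_ts and row.ingest_ts <= prediction_ts


prediction_ts = datetime(2026, 4, 6, 9, 0)

on_time = FeatureRow("u1", datetime(2026, 4, 5, 22, 0), datetime(2026, 4, 5, 22, 5))
late_batch = FeatureRow("u1", datetime(2026, 4, 6, 8, 30), datetime(2026, 4, 6, 13, 0))

print(visible_at(on_time, prediction_ts))     # True
print(visible_at(late_batch, prediction_ts))  # False: happened in time, arrived too late
```

The second row is the dangerous one: its event time looks legitimate, so a join on event time alone would happily use it in training even though production could not have seen it at 9:00.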

A practical point-in-time join pattern (without fancy platform lock-in)

You can implement a robust point-in-time join in many stacks. The example below uses DuckDB because it is explicit and easy to reason about. The same mental model applies if your final run happens in BigQuery, Snowflake, or Spark.

```sql
-- training_events: one row per training example
-- columns: user_id, event_ts, label
-- feature_snapshots: slowly changing feature values
-- columns: user_id, feature_ts, plan_tier, tickets_7d, nps_30d
WITH training_events AS (
    SELECT * FROM read_parquet('s3://ml/training_events_2026_04.parquet')
),
feature_snapshots AS (
    SELECT * FROM read_parquet('s3://ml/feature_snapshots_2026_04.parquet')
),
joined AS (
    SELECT
        e.user_id,
        e.event_ts,
        e.label,
        f.plan_tier,
        f.tickets_7d,
        f.nps_30d,
        f.feature_ts
    FROM training_events e
    ASOF LEFT JOIN feature_snapshots f
        ON e.user_id = f.user_id
        AND e.event_ts >= f.feature_ts
)
SELECT *
FROM joined
WHERE feature_ts IS NOT NULL
  -- freshness guardrail: feature must be at most 24h old at prediction event
  AND event_ts - feature_ts <= INTERVAL '24 hours';
```

This pattern buys you two things:

- You only join feature values known as of the prediction timestamp.

- You make freshness a contract, not an afterthought.

The tradeoff is obvious: stricter freshness means fewer usable training rows during outages or delayed backfills. Relax freshness and you gain coverage but accept staler context. Pick the boundary based on product risk, not convenience.
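The coverage side of that tradeoff is easy to measure before you commit to a boundary. The sketch below is a plain-Python stand-in for the as-of join, not the DuckDB implementation; `asof_join`, `coverage`, and the sample rows are made up for illustration. Unlike the SQL above, it keeps unmatched rows as `None` so they can be counted.

```python
from bisect import bisect_right
from datetime import datetime, timedelta


def asof_join(events, snapshots, ttl):
    """For each (user_id, event_ts), take the latest snapshot with
    feature_ts <= event_ts, and blank it out if it is older than ttl."""
    by_user = {}
    for user_id, feature_ts, value in sorted(snapshots, key=lambda s: (s[0], s[1])):
        ts_list, values = by_user.setdefault(user_id, ([], []))
        ts_list.append(feature_ts)
        values.append(value)

    joined = []
    for user_id, event_ts in events:
        ts_list, values = by_user.get(user_id, ([], []))
        i = bisect_right(ts_list, event_ts) - 1  # index of last feature_ts <= event_ts
        if i >= 0 and event_ts - ts_list[i] <= ttl:
            joined.append((user_id, event_ts, values[i], ts_list[i]))
        else:
            joined.append((user_id, event_ts, None, None))
    return joined


def coverage(joined):
    """Share of training rows that still have a usable feature value."""
    return sum(1 for row in joined if row[2] is not None) / len(joined)


snapshots = [
    ("u1", datetime(2026, 4, 1, 0, 0), 3),
    ("u1", datetime(2026, 4, 2, 12, 0), 5),
    ("u2", datetime(2026, 3, 30, 0, 0), 1),
]
events = [("u1", datetime(2026, 4, 2, 18, 0)), ("u2", datetime(2026, 4, 2, 18, 0))]

strict = asof_join(events, snapshots, ttl=timedelta(hours=24))
relaxed = asof_join(events, snapshots, ttl=timedelta(days=7))
print(coverage(strict), coverage(relaxed))  # 0.5 1.0
```

With the 24-hour boundary, `u2` loses its only feature value because it is almost four days old; widening to seven days recovers the row at the cost of staler context.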

Where dbt snapshots fit, and where they do not

dbt snapshots are great when source rows mutate and you need historical state. They are not a magic point-in-time engine by themselves, but they give your joins trustworthy history.

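```sql
{% snapshot customer_status_snapshot %}

{#
  dbt_valid_to_current (dbt 1.9+) is rendered as a SQL expression, so the date
  is cast instead of passed as a bare string. Adjust the cast to your warehouse.
#}
{{
    config(
        target_schema='snapshots',
        unique_key='customer_id',
        strategy='timestamp',
        updated_at='updated_at',
        dbt_valid_to_current="cast('9999-12-31' as timestamp)"
    )
}}

select
    customer_id,
    plan_tier,
    is_delinquent,
    updated_at
from {{ source('billing', 'customer_status') }}

{% endsnapshot %}
```

```sql
-- Later in a model, ensure prediction event lands inside validity window.
-- The window is half-open: valid_from is inclusive, valid_to is exclusive.
-- No trailing semicolon, because dbt wraps model SQL in its own statement.
select
    p.customer_id,
    p.prediction_ts,
    s.plan_tier,
    s.is_delinquent
from {{ ref('prediction_events') }} p
left join {{ ref('customer_status_snapshot') }} s
    on p.customer_id = s.customer_id
    and p.prediction_ts >= s.dbt_valid_from
    and p.prediction_ts < s.dbt_valid_to
```

Why this works well: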

- The timestamp strategy tracks a single updated_at column, so it copes with added or removed columns better than the check strategy, which depends on a hand-maintained column list.

- You can reason about “what was true then” using validity windows.

Where teams trip: snapshotting every table by default. Snapshot only mutable, prediction-relevant dimensions. Everything else becomes cost and complexity with little model gain.

The leakage test we now run before every model train

Offline metrics can still lie even with decent joins. We now run one cheap leakage sanity check:

- Train once with your normal features.

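- Train again with a deliberate “future leak”: let each training row see feature values from up to 24h after its prediction timestamp (equivalently, shift feature timestamps 24h earlier).

- If the leaked run scores far higher, that future window carries signal your baseline cannot see, which is what a clean pipeline looks like.

- If the two runs score about the same, either the features are not time-sensitive or your baseline already sees future signal. Audit the join before trusting the metric.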

It is not perfect, but it catches embarrassing mistakes fast. This mirrors scikit-learn’s guidance to separate fit and transform boundaries carefully so test-time information does not contaminate training.
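The mechanics of the two-run comparison can be sketched in a few lines of Python. Everything here is illustrative: `build_rows`, `leakage_gap`, and the sample data are hypothetical, and `toy_score` is a trivial stand-in for "train a model and return a metric". The leak is applied by moving the as-of cutoff 24 hours past each event. The sketch only reports both scores and the gap between them.

```python
from datetime import datetime, timedelta


def latest_value(history, cutoff):
    """Latest feature value with feature_ts <= cutoff, or None if there is none."""
    visible = [(ts, value) for ts, value in history if ts <= cutoff]
    return max(visible, key=lambda pair: pair[0])[1] if visible else None


def build_rows(events, features, leak=timedelta(0)):
    """Join each event to its as-of feature; leak > 0 moves the cutoff into the future."""
    return [
        (latest_value(features.get(user_id, []), event_ts + leak), label)
        for user_id, event_ts, label in events
    ]


def leakage_gap(events, features, score, leak=timedelta(hours=24)):
    """Score the normal join and the deliberately leaked join with the same scorer."""
    baseline = score(build_rows(events, features))
    leaked = score(build_rows(events, features, leak))
    return baseline, leaked, leaked - baseline


def toy_score(rows):
    # Stand-in metric: how often the feature value already equals the label.
    return sum(1 for feature, label in rows if feature == label) / len(rows)


t0 = datetime(2026, 4, 6, 9, 0)
events = [("u1", t0, 1), ("u2", t0, 0)]
features = {
    # A status flag that flips for u1 three hours after the prediction event.
    "u1": [(t0 - timedelta(days=1), 0), (t0 + timedelta(hours=3), 1)],
    "u2": [(t0 - timedelta(days=1), 0)],
}

baseline, leaked, gap = leakage_gap(events, features, toy_score)
print(baseline, leaked, gap)  # 0.5 1.0 0.5
```

In a real pipeline, `score` would wrap your actual training and evaluation run, and the gap would be logged next to the model artifact so it can be compared across retrains.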

How this connects to mobile and API reliability work

If this sounds familiar, it should. In mobile sync, we already protect state correctness with ETags and idempotency keys (mobile sync incident write-up). In APIs, we use explicit backpressure and retry contracts (ASP.NET rate limiting runbook).

Data science pipelines need the same discipline: time contracts, freshness budgets, and failure behavior that is visible, not assumed.

Troubleshooting: when your point-in-time pipeline still behaves strangely

1) Symptom: offline AUC is high, online precision drops within 48 hours

Likely cause: training used “future-known” feature rows from late-arriving batches.

Check: sample 200 training rows and verify that every feature timestamp is ≤ prediction timestamp.

Fix: enforce join predicate on event time and reject rows violating freshness SLO.
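The sampling check above might look like this in Python. It is a sketch under assumptions: training rows are dicts with `prediction_ts` and `feature_ts` keys, and `find_future_features` is a hypothetical helper name.

```python
import random
from datetime import datetime


def find_future_features(rows, sample_size=200, seed=0):
    """Sample training rows and return those whose feature is newer than the prediction."""
    rng = random.Random(seed)  # fixed seed so a failing sample can be reproduced
    sample = rows if len(rows) <= sample_size else rng.sample(rows, sample_size)
    return [r for r in sample if r["feature_ts"] > r["prediction_ts"]]


rows = [
    {"user_id": "u1", "prediction_ts": datetime(2026, 4, 6, 9), "feature_ts": datetime(2026, 4, 6, 8)},
    {"user_id": "u2", "prediction_ts": datetime(2026, 4, 6, 9), "feature_ts": datetime(2026, 4, 6, 13)},
    {"user_id": "u3", "prediction_ts": datetime(2026, 4, 6, 9), "feature_ts": datetime(2026, 4, 5, 9)},
]

violations = find_future_features(rows)
print([r["user_id"] for r in violations])  # ['u2']
```

Wired into CI, a non-empty result would fail the job before training starts.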

2) Symptom: lots of NULL features after enforcing point-in-time joins

Likely cause: your feature TTL is tighter than real pipeline latency.

Check: histogram of (prediction_ts - feature_ts) for the last 7 days.

Fix: either improve ingestion latency or widen TTL for non-critical features only. Do not widen globally without risk review.
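One way to build that lag histogram without any plotting library is to bucket the lags and count them. The helper names, the 6-hour bucket width, and the sample timestamps below are arbitrary choices for illustration.

```python
from collections import Counter
from datetime import datetime, timedelta


def lag_histogram(pairs, bucket=timedelta(hours=6)):
    """Count (prediction_ts - feature_ts) lags per bucket; keys are bucket starts in hours."""
    counts = Counter()
    for prediction_ts, feature_ts in pairs:
        lag = prediction_ts - feature_ts
        start = (lag // bucket) * bucket  # floor the lag to its bucket boundary
        counts[start.total_seconds() / 3600] += 1
    return dict(sorted(counts.items()))


def share_over(pairs, ttl):
    """Fraction of rows whose feature would be rejected by the given TTL."""
    return sum(1 for p, f in pairs if p - f > ttl) / len(pairs)


t = datetime(2026, 4, 6, 9, 0)
pairs = [(t, t - timedelta(hours=h)) for h in (1, 5, 7, 30)]

hist = lag_histogram(pairs)
print(hist)                                    # {0.0: 2, 6.0: 1, 30.0: 1}
print(share_over(pairs, timedelta(hours=24)))  # 0.25
```

If most of the mass sits just past the TTL, the boundary is fighting normal pipeline latency. A negative bucket key means a feature is newer than its prediction, which is a leak rather than a freshness problem.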

3) Symptom: model retrains are nondeterministic across reruns

Likely cause: source tables are mutable and no snapshot/version pin is used.

Check: rerun training with same code and compare training row hashes.

Fix: pin source versions (for example, snapshot tables or warehouse time-travel reads) and record them with model artifacts.
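For the row-hash comparison, an order-independent fingerprint is usually enough to tell whether two reruns saw the same data. This is a minimal sketch: `dataset_fingerprint` is a hypothetical helper, and it assumes each training row is a dict whose values can be rendered as strings.

```python
import hashlib
import json


def dataset_fingerprint(rows):
    """Order-independent SHA-256 over training rows."""
    row_hashes = sorted(
        hashlib.sha256(
            json.dumps(row, sort_keys=True, default=str).encode("utf-8")
        ).hexdigest()
        for row in rows
    )
    return hashlib.sha256("".join(row_hashes).encode("utf-8")).hexdigest()


run_a = [{"user_id": "u1", "plan_tier": "pro"}, {"user_id": "u2", "plan_tier": "free"}]
run_b = [{"user_id": "u2", "plan_tier": "free"}, {"user_id": "u1", "plan_tier": "pro"}]
run_c = [{"user_id": "u1", "plan_tier": "pro"}, {"user_id": "u2", "plan_tier": "pro"}]

print(dataset_fingerprint(run_a) == dataset_fingerprint(run_b))  # True: same rows, new order
print(dataset_fingerprint(run_a) == dataset_fingerprint(run_c))  # False: u2 was mutated
```

Storing the fingerprint with the model artifact gives incident reviews a quick answer to "did the data change, or did the code?"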

FAQ

Q1) Are point-in-time joins only needed for feature stores like Feast?

No. Feature stores make them easier, but the requirement exists in any training pipeline. Feast’s historical retrieval API simply formalizes the same principle.

Q2) Should I snapshot every mutable table with dbt?

Usually no. Snapshot the dimensions that materially affect predictions or audits. Blanket snapshotting increases spend and slows change reviews.

Q3) Can warehouse time travel replace snapshots completely?

Sometimes, not always. Time travel is excellent for restoring/querying prior table states, but retention windows and table-level semantics may not cover every long-horizon reproducibility need. Many teams combine both.

Actionable takeaways for this week

- Define a written feature freshness SLO per model tier (for example: gold ≤2h, silver ≤24h).

- Add an automated “future timestamp” data-quality test in CI before model training starts.

- Write point-in-time join predicates explicitly; never infer them from convenience columns.

- Snapshot only high-impact mutable dimensions and document why each snapshot exists.

- Version and log data cut identifiers with each model artifact for reproducible incident reviews.
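A written freshness SLO is easiest to enforce when it also lives in code. The sketch below reuses the example tiers from the first takeaway; the `FRESHNESS_SLO` table and `check_freshness` function are hypothetical names, and the thresholds should come from your own risk review.

```python
from datetime import datetime, timedelta

# Example tiers from the takeaway above; the real values are a product decision.
FRESHNESS_SLO = {
    "gold": timedelta(hours=2),
    "silver": timedelta(hours=24),
}


def check_freshness(tier, feature_ts, prediction_ts):
    """Classify one feature value as 'ok', 'stale', or 'future' for its model tier."""
    age = prediction_ts - feature_ts
    if age < timedelta(0):
        return "future"  # feature is newer than the prediction: a leak, not a delay
    return "ok" if age <= FRESHNESS_SLO[tier] else "stale"


prediction_ts = datetime(2026, 4, 6, 9, 0)
three_hours_old = prediction_ts - timedelta(hours=3)

print(check_freshness("gold", three_hours_old, prediction_ts))    # stale
print(check_freshness("silver", three_hours_old, prediction_ts))  # ok
print(check_freshness("gold", prediction_ts + timedelta(minutes=5), prediction_ts))  # future
```

Keeping "future" separate from "stale" matters because the two call for different responses: one is a latency problem, the other is a correctness bug.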

Sources reviewed

- dbt docs: Snapshots and timestamp strategy best practices.

- Feast docs: Point-in-time joins and TTL behavior in historical retrieval.

- scikit-learn docs: Data leakage pitfalls and fit/transform boundaries.

- BigQuery docs: FOR SYSTEM_TIME AS OF and historical table recovery.

- Related 7tech context: trustworthy analytics under acceleration.

If your model metrics and product outcomes disagree, assume clock mismatch before model weakness. In my experience, fixing timeline integrity often improves trust faster than another week of hyperparameter tuning.
