At 8:40 on a Wednesday morning, our analytics Slack channel lit up with a quiet kind of panic. A revenue QA notebook that had been stable for months suddenly disagreed with the warehouse snapshot. No deploy had happened. No pipeline was red. But one number moved, and then every downstream chart looked “almost right,” which is the most dangerous kind of wrong.

The root cause was not dramatic. A helper function returned a subset, someone updated that subset in place, and a second object silently changed with it. We had seen this class of bug before in pandas, but it used to be inconsistent enough that teams learned to “just be careful.” With pandas 3.0, that era is over, and honestly, that is good news.

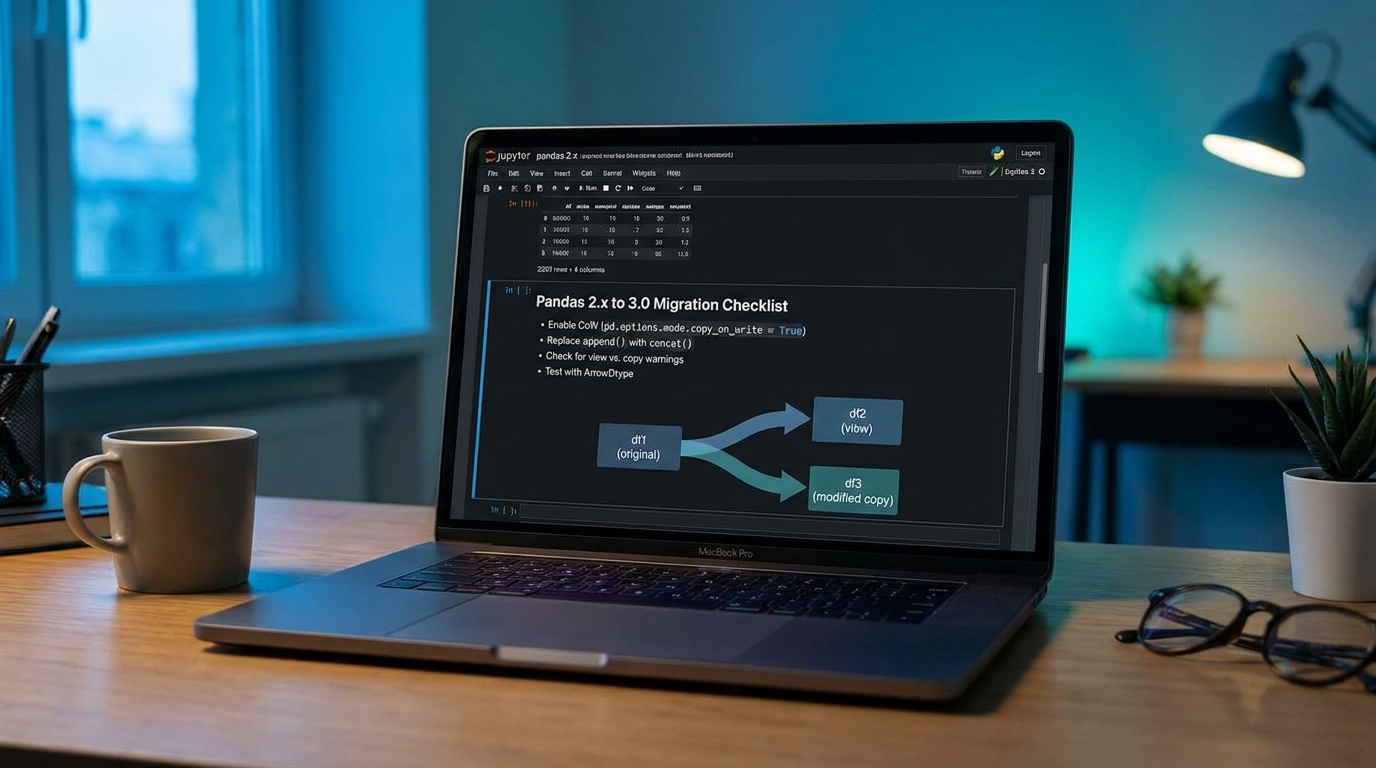

This guide is a field-tested pandas copy-on-write migration playbook for teams that run real workloads, not toy notebooks. We will cover what changes in pandas 3.0, how to migrate without stalling delivery, and where teams usually trip during rollout.

The two behavior shifts that matter most in production

According to the pandas docs, Copy-on-Write (CoW) is the default in pandas 3.0, and chained assignment is no longer a “sometimes works” pattern. You should treat this as a reliability upgrade, not just a syntax cleanup.

- CoW default: mutations should only affect the object you mutate.

- Chained assignment breaks by design: patterns like `df["col"][mask] = value` are no longer valid behavior.

- Read-only NumPy views: some arrays obtained from pandas objects are non-writeable unless explicitly copied.

- String dtype migration: pandas 3.0 defaults string inference to the new `str` dtype behavior (Arrow-backed when available), which can expose object-dtype assumptions.

If you have ever debugged metric drift where code “looked fine,” this is a net win. The cost is that old implicit patterns become explicit refactors.
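To make the first shift concrete, here is a minimal sketch of the "helper returns a subset" situation from the opening story. The frame, the column names, and the `first_orders` helper are hypothetical; the version check only exists so the snippet behaves the same on pandas 2.x, where CoW has to be switched on by hand.

```python
import pandas as pd

# CoW is the default from pandas 3.0; on 2.x it must be enabled explicitly.
if int(pd.__version__.split(".")[0]) < 3:
    pd.options.mode.copy_on_write = True

df = pd.DataFrame({"order_id": [1, 2, 3], "gross": [100.0, 200.0, 300.0]})


def first_orders(frame, n=2):
    """Hypothetical helper that hands back a slice of the caller's frame."""
    return frame.iloc[:n]


subset = first_orders(df)
subset.iloc[0, 1] = 0.0  # mutate only the object we were handed

# Under CoW the write stays local to `subset`; `df` keeps its original values.
parent_values = df["gross"].tolist()
subset_values = subset["gross"].tolist()
print(parent_values, subset_values)
```

Without CoW, the same write on a positional slice could leak back into `df`, which is exactly the class of drift described above.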

A migration plan that does not freeze your roadmap

Phase 1: Turn warning noise into a migration backlog

On pandas 2.2+, run CI with CoW warning mode enabled. Do not fix everything blindly. Triage by data criticality: billing, attribution, and executive dashboards first.

    # ci_cow_probe.py
    import warnings

    import pandas as pd

    # "warn" mode is available on pandas 2.2.x
    pd.options.mode.copy_on_write = "warn"

    # Turn CoW behavior changes into visible CI signals
    with warnings.catch_warnings(record=True) as caught:
        warnings.simplefilter("always")
        # import and run your smoke-level transforms
        from analytics.smoke import run_smoke_suite

        run_smoke_suite()

    # Keep only the warnings that point at CoW-related behavior changes
    important = []
    for w in caught:
        msg = str(w.message)
        if "ChainedAssignment" in msg or "copy_on_write" in msg:
            important.append(msg)

    if important:
        print("\n".join(f"[COW] {m}" for m in important[:50]))
        raise SystemExit("Failing CI: Copy-on-Write migration warnings detected")

The trick is scope. Start with smoke transforms that cover high-impact business logic. Expand weekly instead of trying to perfect all notebooks in one sprint.
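One way to turn the captured warnings into an actual backlog is to group them by call site, so the noisiest files surface first. The sketch below is an assumption about how you might do that, not part of the probe itself: `backlog_from_warnings` is a hypothetical helper, and it reuses the same substring filter as the probe above. The two `warnings.warn` calls are stand-ins for what a real smoke suite would emit.

```python
import warnings
from collections import Counter

# Same substrings the probe filters on; adjust to the messages you actually see.
KEYWORDS = ("ChainedAssignment", "copy_on_write")


def backlog_from_warnings(caught, keywords=KEYWORDS):
    """Count matching warnings per 'file:line' call site."""
    sites = Counter()
    for w in caught:
        if any(k in str(w.message) for k in keywords):
            sites[f"{w.filename}:{w.lineno}"] += 1
    return sites


# Stand-in for a smoke run: one relevant warning, one unrelated.
with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    warnings.warn("ChainedAssignmentError: demo message", FutureWarning)
    warnings.warn("unrelated deprecation", DeprecationWarning)

backlog = backlog_from_warnings(caught)
for site, count in backlog.most_common(10):
    print(f"{count:4d}  {site}")
```

Sorting by count gives a rough priority order; combining it with the data-criticality triage (billing, attribution, executive dashboards) is still a human call.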

Phase 2: Refactor unsafe writes into single-step intent

Most breakages are straightforward: collapse chained operations into a single .loc write, and rewrite inplace mutations as explicit assignments. The behavior becomes easier to reason about and review.

    # BEFORE: ambiguous or broken with CoW
    orders = df[df["country"] == "IN"]
    orders["net"] = orders["gross"] - orders["tax"]  # writes to the subset only; df never sees "net"
    df["status"][df["latency_ms"] > 3000] = "slow"  # chained assignment
    df["channel"].replace("fb", "meta", inplace=True)  # inplace on selected column

    # AFTER: explicit and CoW-compliant
    mask_in = df["country"].eq("IN")
    df.loc[mask_in, "net"] = df.loc[mask_in, "gross"] - df.loc[mask_in, "tax"]
    df.loc[df["latency_ms"].gt(3000), "status"] = "slow"
    df["channel"] = df["channel"].replace("fb", "meta")

Notice the tradeoff here. The refactor may look slightly longer, but the mutation target is explicit, and reviewer ambiguity drops sharply.
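The AFTER version writes `net` into `df` itself. If what you actually wanted was a separate `orders` frame, a possible alternative is to filter and derive in one expression with `DataFrame.assign`. The sample data below is hypothetical; the column names follow the snippet above.

```python
import pandas as pd

# Hypothetical sample data for illustration only.
df = pd.DataFrame(
    {
        "country": ["IN", "US", "IN"],
        "gross": [100.0, 200.0, 50.0],
        "tax": [18.0, 20.0, 9.0],
    }
)

# Filter and derive in a single expression. The result is a new object,
# so there is no write on a subset for a reviewer to second-guess.
orders = df.loc[df["country"].eq("IN")].assign(
    net=lambda d: d["gross"] - d["tax"]
)

print(orders)
```

Which form to prefer depends on intent: use `.loc` on the original when `df` should change, and `assign` when the derived frame is the product.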

Phase 3: Audit dtype assumptions before pandas 3.0 cutover

The pandas 3.0 string migration can break code paths that check dtype == "object" or rely on mixed-type string columns. Replace brittle checks with is_string_dtype, and use pd.isna instead of checking None directly.
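As a rough sketch of what that replacement can look like, the helpers below (`text_columns` and `missing_count`, both hypothetical names) check the dtype rather than comparing against "object", and count missing values without caring which sentinel is used. Note that passing an object dtype to `is_string_dtype` still returns True, so object columns that hold non-string values need a separate check.

```python
import pandas as pd
from pandas.api.types import is_string_dtype


def text_columns(frame):
    """Names of columns whose dtype pandas treats as string-like.

    Works for both legacy object columns and the newer string dtypes.
    """
    return [name for name in frame.columns if is_string_dtype(frame[name].dtype)]


def missing_count(series):
    """Count missing entries, whether they are stored as None, NaN, or pd.NA."""
    return int(series.isna().sum())


# Hypothetical sample frame.
df = pd.DataFrame({"channel": ["fb", None, "email"], "clicks": [3, 5, 8]})

print(text_columns(df))
print(missing_count(df["channel"]))
```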

This is also where NumPy views vs copies matters. Teams that mutate arrays returned from to_numpy() should decide intentionally: either copy before mutation or accept writeability override risk with clear ownership.
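The "copy before mutation" option can be as small as the sketch below. The `capped_array` helper and the sample series are hypothetical; the version check is only there so the snippet runs with CoW semantics on pandas 2.x as well.

```python
import pandas as pd

# CoW is the default from pandas 3.0; on 2.x it must be enabled explicitly.
if int(pd.__version__.split(".")[0]) < 3:
    pd.options.mode.copy_on_write = True

latency = pd.Series([120.0, 4500.0, 900.0], name="latency_ms")


def capped_array(series, cap):
    """Return a NumPy array we own, with values above `cap` clipped to it."""
    arr = series.to_numpy(copy=True)  # explicit copy: safe to mutate
    arr[arr > cap] = cap
    return arr


capped = capped_array(latency, cap=3000.0)

# For comparison: inspect the writeable flag of the non-copied array.
shared = latency.to_numpy()
print(shared.flags.writeable, capped.flags.writeable)
```

The copy costs memory, so for very large arrays it is worth checking whether the transform can stay in pandas instead of dropping to NumPy.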

Where this fits in your broader reliability stack

CoW migration is not isolated cleanup. It aligns with the same engineering principle behind safer rollouts and verifiable analytics:

- Data correctness workflows pair well with our SQL validation playbook: human-verified analytics checks.

- Guardrails mindset matches Python automation safety patterns: guardrailed automation design.

- CI enforcement belongs with deployment integrity controls: workflow integrity in CI/CD.

- And if your team mixes stream and batch ownership, connect this with outcome-based service reliability: backend drift resilience.

Troubleshooting: what breaks first (and fast fixes)

1) “My assignment used to work, now values do not change”

Cause: chained assignment or mutation on a temporary object.

Fix: replace with single-step .loc[row_mask, col] write on the original DataFrame.

2) “NumPy array from pandas is read-only”

Cause: CoW protects shared memory views.

Fix: use arr = ser.to_numpy(copy=True) before mutation. Only set arr.flags.writeable = True when you fully own lifecycle and accept side effects.

3) “String column checks started failing after upgrade”

Cause: code assumes object dtype for text.

Fix: use pd.api.types.is_string_dtype(series.dtype), and avoid logic tied to exact sentinel values like None.

4) “Memory increased after refactor”

Cause: extra intermediate references keep shared blocks alive longer.

Fix: reuse variables intentionally, and avoid unnecessary branching copies in hot loops. Measure with realistic dataset sizes, not tiny notebook samples.

Tradeoffs you should explain to stakeholders

You are trading short-term refactor time for long-term predictability. In practical terms:

- Pros: fewer hidden side effects, cleaner reviews, safer concurrent development across notebooks and services.

- Cons: migration noise, some rewrites of legacy idioms, and potential short-lived performance regressions if you accidentally keep too many references alive.

- Neutral but important: this is not just “new pandas syntax.” It is a semantic contract change around mutability.

If your team reports business metrics or financial summaries, this trade is almost always worth it.

FAQ

Q1) Do we need to migrate every notebook before upgrading to pandas 3.0?

No, but you should migrate critical paths first. Use warning mode in pandas 2.2+ to identify high-risk flows, then gate 3.0 rollout by data domain (finance, growth, ops).

Q2) Is Copy-on-Write slower?

Not necessarily. CoW can reduce average memory use and improve performance by deferring copies, but real outcomes depend on your reference patterns. Benchmark representative jobs, not isolated micro-snippets.

Q3) Should we force everything to Arrow-backed strings immediately?

Not blindly. Validate integration points first (serialization, UDF boundaries, third-party libraries). Adopt pandas 3.0 string dtype intentionally, with tests around missing-value behavior and type checks.

Actionable takeaways for this week

- Enable CoW warning mode in CI for your top 10 business-critical transforms.

- Ban chained assignment in review guidelines and lint templates.

- Replace `dtype == "object"` checks with robust string-dtype detection.

- Add one regression test that validates outputs before and after pandas 3.0 upgrade for the same snapshot input.

- Document mutation rules in your analytics style guide so new contributors do not reintroduce old patterns.
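For the lint item, a very rough heuristic can be built on the standard library `ast` module: flag any assignment whose target is a subscript of a subscript. This is a sketch, not an existing linter rule. `find_chained_assignments` is a hypothetical name, and the check will also flag legitimate nested dict or list writes, so treat hits as review prompts rather than errors.

```python
import ast


def find_chained_assignments(source):
    """Return line numbers where an assignment target looks like x[a][b] = ..."""
    hits = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Assign):
            targets = node.targets
        elif isinstance(node, ast.AugAssign):
            targets = [node.target]
        else:
            continue
        for target in targets:
            # x[a][b] parses as Subscript(value=Subscript(...))
            if isinstance(target, ast.Subscript) and isinstance(
                target.value, ast.Subscript
            ):
                hits.add(node.lineno)
    return sorted(hits)


sample = '''
df["status"][df["latency_ms"] > 3000] = "slow"
df.loc[df["latency_ms"] > 3000, "status"] = "slow"
'''

flagged = find_chained_assignments(sample)
print(flagged)
```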

If you do only these five steps, you will cut the riskiest upgrade failures by a lot, and your data team will spend less time explaining mysterious drift to everyone else.
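The regression-test step can start very small. The sketch below assumes a hypothetical `revenue_by_country` transform and inlines both the snapshot input and the expected output as CSV text; in practice those would be files checked in next to the test and run under both pandas versions.

```python
import io

import pandas as pd

# Hypothetical frozen input and the output we expect from it.
SNAPSHOT_CSV = "country,gross,tax\nIN,100.0,18.0\nUS,200.0,20.0\nIN,50.0,9.0\n"
EXPECTED_CSV = "country,net\nIN,123.0\nUS,180.0\n"


def revenue_by_country(orders):
    """Hypothetical transform under test: net revenue summed per country."""
    with_net = orders.assign(net=orders["gross"] - orders["tax"])
    return with_net.groupby("country", as_index=False)["net"].sum()


def check_against_snapshot():
    """Raise AssertionError if the transform output drifts from the snapshot."""
    orders = pd.read_csv(io.StringIO(SNAPSHOT_CSV))
    expected = pd.read_csv(io.StringIO(EXPECTED_CSV))
    actual = revenue_by_country(orders)
    # check_dtype=False: compare values, tolerate dtype differences.
    pd.testing.assert_frame_equal(actual, expected, check_dtype=False)
    return actual


result = check_against_snapshot()
print(result)
```

Relaxing the dtype check is a deliberate choice here: the goal is to catch value drift first, and dtype changes from the string migration can be asserted separately once you know what you expect.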

Sources reviewed: pandas Copy-on-Write guide, pandas 2.2 release notes (3.0 behavior preview), pandas 3.0 string migration guide, and NumPy copies-vs-views documentation.
